Two Critical CVEs Just Blew Open the MCP Ecosystem, And Developers Were the Target

The Model Context Protocol was supposed to solve the integration problem. Instead of every AI application building custom connectors for every data source and tool, MCP would provide a universal standard, what its creators called “USB-C for AI.” By mid-2025, the protocol had gained significant traction. Major AI platforms were adopting it. Thousands of MCP servers were being deployed. The ecosystem was growing fast.

But ecosystems built on speed have a tendency to outrun their security foundations. And in July 2025, two critical vulnerabilities in MCP’s own reference implementations proved that the protocol’s biggest security risks weren’t theoretical. They were sitting in the tools developers used every day.

The vulnerabilities nobody expected to find in reference code

CVE-2025-49596 hit first. Discovered by researchers at Oligo Security, this vulnerability affected the MCP Inspector, the official debugging tool that developers used to test and troubleshoot MCP server connections. The CVSS score: 9.4 out of 10. Critical severity.

The attack vector was disturbingly simple. MCP Inspector’s default configuration bound to 0.0.0.0:6277, meaning it listened on all network interfaces, not just localhost. It had no authentication mechanism. Its CORS policy was permissive. An attacker only needed to trick a developer into visiting a malicious website while the Inspector was running. From there, the attacker could exploit a combination of DNS rebinding and cross-site request forgery to achieve full remote code execution on the developer’s machine.
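To make the first two of those defaults concrete, here is a minimal Python sketch of a local debugging endpoint that binds to loopback only and refuses requests without a per-session token. It is an illustration under stated assumptions, not the Inspector’s actual code: `SESSION_TOKEN`, `is_authorized`, `Handler`, and `make_server` are hypothetical names, and the bearer-token scheme is simply one reasonable choice.

```python
import hmac
import secrets
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical per-process secret, generated at startup and shown to the
# developer out of band (for example, printed to the terminal).
SESSION_TOKEN = secrets.token_urlsafe(32)


def is_authorized(auth_header, expected_token):
    """Return True only for an 'Authorization: Bearer <token>' header that matches."""
    if not auth_header or not auth_header.startswith("Bearer "):
        return False
    presented = auth_header[len("Bearer "):]
    # Constant-time comparison avoids leaking the token through timing.
    return hmac.compare_digest(presented.encode(), expected_token.encode())


class Handler(BaseHTTPRequestHandler):
    def do_GET(self):
        if not is_authorized(self.headers.get("Authorization"), SESSION_TOKEN):
            self.send_error(401, "missing or invalid token")
            return
        body = b"ok\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port=0):
    # Bind to 127.0.0.1 rather than 0.0.0.0 so that only local processes
    # can open a connection. Port 0 asks the OS for any free port.
    return HTTPServer(("127.0.0.1", port), Handler)
```

Calling `make_server(6277).serve_forever()` would start the listener. Binding to loopback does not stop a browser on the same machine from reaching the port, which is why the token check still matters.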

Avi Lumelsky, an AI security researcher at Oligo Security who discovered the flaw, explained the attack chain: the vulnerability leveraged a “0.0.0.0-day” flaw combined with DNS rebinding to bypass browser security boundaries. The attacker’s malicious page would send requests to a domain that resolved to 0.0.0.0, which gave it access to the locally running Inspector. From there, the attacker could send arbitrary commands to any MCP server the Inspector was connected to.

All versions of MCP Inspector prior to 0.14.1 were vulnerable. At the time, the tool had more than 4,000 GitHub stars and approximately 38,000 weekly downloads.

The second vulnerability was arguably worse. CVE-2025-6514, also a critical remote code execution flaw, carried a CVSS score of 9.6 and targeted mcp-remote, the npm package that enabled remote MCP server connections. Discovered by JFrog’s security research team, this vulnerability affected a package with over 558,000 downloads. It used a similar attack pattern: a local proxy server that lacked proper access controls, exploitable through browser-based attacks when developers visited malicious web pages.

Why reference implementations matter more than you think

There is a reasonable question to ask here: why do vulnerabilities in developer tooling matter so much? Developers aren’t production systems. Testing tools aren’t customer-facing.

The answer lies in how modern software ecosystems actually work. Reference implementations set the security standard for everything built on top of them. When Anthropic published MCP Inspector and mcp-remote, they weren’t just shipping tools. They were establishing patterns. Developers copied those patterns into their own implementations. If the reference code didn’t authenticate connections, developers building their own MCP tools assumed authentication wasn’t necessary. If the reference code bound to all interfaces by default, that became the default everywhere.

Saumil Shah, a researcher at Qualys who published a detailed technical analysis of CVE-2025-49596, described the fundamental problem: MCP Inspector exposed “a web-based interface that interacts with MCP servers” without adequate security controls, creating “an attack surface that could be exploited remotely through a web browser.” The gap between what the protocol specified and what the implementations enforced was vast.

The other reason developer tooling vulnerabilities matter is supply chain risk. A compromised developer machine is a stepping stone to everything that developer has access to: source code repositories, deployment credentials, production infrastructure, customer data. The same logic that made SolarWinds devastating applies here. You don’t need to attack the fortress directly if you can compromise the builder.

Shachar Menashe, Senior Director of JFrog Security Research, noted in the CVE-2025-6514 disclosure that the vulnerability could allow attackers to “execute arbitrary code on the victim’s machine” simply by enticing a developer to visit a crafted webpage. For a package downloaded more than half a million times, the blast radius was substantial.

The timing tells the real story

What makes these vulnerabilities particularly significant isn’t just their severity scores. It’s the timeline.

Anthropic released MCP as an open standard in November 2024. By June 2025, the protocol had been adopted by major AI platforms. The MCP specification was updated in June 2025 to mandate OAuth 2.1 for authentication, an acknowledgment that the original specification had left authentication as essentially optional. The Inspector flaw, CVE-2025-49596, was reported to Anthropic in March 2025 and patched on June 13, 2025, with the release of MCP Inspector version 0.14.1.

The patched version added session-based authentication, HTTP header validation, and DNS rebinding protection. These were not edge-case security features. They were fundamentals, the kind of controls that should have been present from day one.
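Header validation is the piece that blunts DNS rebinding, and it fits in a few lines. In a rebinding attack the browser connects to a local address but still sends the attacker’s domain in the `Host` header, so rejecting unexpected `Host` and `Origin` values closes that path. The Python sketch below shows the general technique only; it is not the code that shipped in 0.14.1, and `ALLOWED_HOSTNAMES`, `host_allowed`, `origin_allowed`, and `request_allowed` are hypothetical names.

```python
from urllib.parse import urlsplit

# Hostnames a local tool would expect to be addressed by (an assumption).
ALLOWED_HOSTNAMES = {"localhost", "127.0.0.1"}


def host_allowed(host_header, port):
    """Accept only Host headers such as 'localhost:6277' for the expected port."""
    if not host_header:
        return False
    try:
        # Prefixing '//' makes urlsplit treat the value as a network location.
        parts = urlsplit("//" + host_header)
        return parts.hostname in ALLOWED_HOSTNAMES and parts.port == port
    except ValueError:
        return False


def origin_allowed(origin_header, port):
    """Allow a missing Origin (non-browser clients) or one served by this tool."""
    if origin_header is None:
        return True
    try:
        parts = urlsplit(origin_header)
        return (
            parts.scheme in ("http", "https")
            and parts.hostname in ALLOWED_HOSTNAMES
            and parts.port == port
        )
    except ValueError:
        return False


def request_allowed(headers, port):
    """Combine both checks; `headers` is a plain dict of request headers."""
    return host_allowed(headers.get("Host"), port) and origin_allowed(
        headers.get("Origin"), port
    )
```

A request with `Host: attacker.example:6277` is refused even when it arrives on the loopback interface. These checks complement session tokens rather than replace them.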

Ridge Security’s threat research team published an analysis noting that the vulnerability revealed “critical gaps in how AI tooling components are secured during development,” and argued that “the very tools designed to accelerate AI development could become vectors for compromise.” The researchers highlighted that many organizations had deployed MCP Inspector in development environments that were connected to production networks, multiplying the risk.

Consider the sequence: a protocol ships without mandatory authentication. Reference implementations ship without basic access controls. An ecosystem of thousands of servers grows around those patterns. Six months later, someone discovers that the official tools have critical remote code execution vulnerabilities exploitable from a web browser. By the time patches arrive, the insecure patterns have been replicated across the ecosystem.

This isn’t a story about two CVEs. It’s a story about what happens when protocol adoption outpaces protocol security.

The ecosystem amplification problem

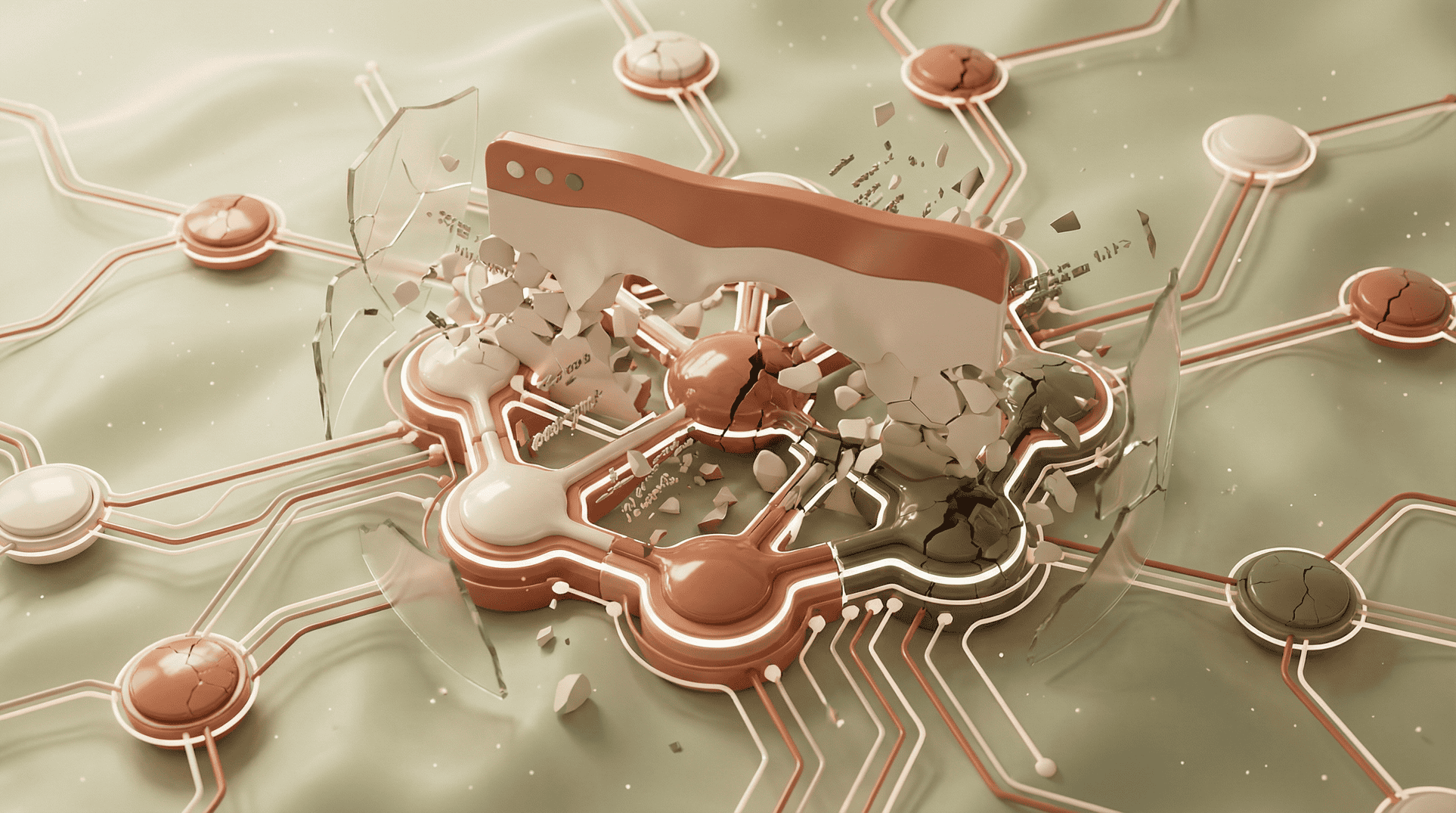

The two reference implementation vulnerabilities didn’t exist in isolation. They were part of a broader pattern of MCP security problems that emerged throughout 2025.

In a parallel timeline, researchers were discovering additional critical flaws. CVE-2025-6515 affected the mcp-remote package’s handling of SSE connections. CVE-2025-53967 targeted authentication token handling. When SOCRadar published a comprehensive analysis of MCP’s vulnerability landscape, they counted five critical CVEs disclosed within a six-month window, all in core ecosystem components.

David Onwukwe, a principal solutions engineer at BlueRock Security, conducted an analysis of over 7,000 MCP servers and found that 36.7% had potential SSRF (Server-Side Request Forgery) vulnerabilities. The study focused on Microsoft’s MarkItDown MCP server, one of the most popular in the ecosystem with 85,000 GitHub stars, and demonstrated how an unrestricted URI parameter could be used to access cloud metadata services, steal AWS credentials, and potentially achieve full account takeover.

Harold Byun, BlueRock’s chief product officer, told Dark Reading that the MarkItDown vulnerability illustrated a fundamental gap in how MCP servers were being built: “It could be a file that is internal; it could be any other file that is accessible via the network, to that given server, any other type of arbitrary call that you would want to make.” The server simply didn’t restrict what URIs it would fetch.

The pattern was consistent: MCP servers were being built with the assumption that all inputs were trustworthy. That assumption was inherited from the reference implementations, which didn’t model untrusted input as a threat.

What this means for anyone deploying MCP

I spend my days architecting AI-powered systems that connect to external tools and data sources. MCP’s value proposition is real: standardized integration is genuinely better than one-off connectors for every API. But the July 2025 CVEs revealed something that should concern any enterprise security team: the protocol’s ecosystem was built on a foundation that treated security as a feature to add later, not a constraint to build around.

The gap between protocol specification and implementation security isn’t unique to MCP. It’s happened with every major protocol adoption cycle. HTTPS took years to go from “optional” to “mandatory.” OAuth had its own series of implementation vulnerabilities before the ecosystem stabilized. But MCP’s ecosystem is growing faster than those predecessors did, and the attack surface is fundamentally different. MCP servers don’t just exchange data. They execute actions. A compromised MCP server isn’t a data leak; it’s a code execution engine under someone else’s control.

The practical takeaway for enterprise teams evaluating or deploying MCP is uncomfortable but necessary: you cannot trust the ecosystem to be secure by default. Not yet.

What to do Monday morning

If your organization is deploying or evaluating MCP, these are the actions that matter right now:

Patch immediately. Update MCP Inspector to version 0.14.1 or later. Update mcp-remote to version 0.1.16 or later. Inventory every MCP-related package in your development environment and verify versions against known CVEs.
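A version inventory can start as a small script. The Python sketch below compares installed versions against the two minimums named above. The function names are hypothetical, and the npm name `@modelcontextprotocol/inspector` is an assumption about how the Inspector is published, so confirm it against your own lockfile. Pre-release and build suffixes are ignored for simplicity.

```python
# Minimum patched versions taken from the text above. The Inspector's npm
# package name is an assumption; verify it in your own dependency tree.
MIN_PATCHED = {
    "@modelcontextprotocol/inspector": (0, 14, 1),
    "mcp-remote": (0, 1, 16),
}


def parse_version(text):
    """Turn '0.14.1' or 'v0.14.1-beta' into (0, 14, 1), dropping any suffix."""
    core = text.strip().lstrip("v").split("-", 1)[0].split("+", 1)[0]
    return tuple(int(part) for part in core.split("."))


def unpatched(installed):
    """Return the sorted names of tracked packages that are below the minimum.

    `installed` maps package name to version string, for example as read
    from a lockfile or a package manager listing.
    """
    findings = []
    for name, version in installed.items():
        minimum = MIN_PATCHED.get(name)
        if minimum is not None and parse_version(version) < minimum:
            findings.append(name)
    return sorted(findings)
```

Feeding it `{"mcp-remote": "0.1.15"}` flags the package, while `"0.1.16"` passes. Extending `MIN_PATCHED` as new advisories appear keeps the check current.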

Audit your network configuration. Any MCP server or tool binding to 0.0.0.0 is listening on all interfaces. Restrict to localhost unless remote access is explicitly required and properly authenticated. Check firewall rules between development environments and production networks.
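One way to run that audit is to collect listening addresses from whatever tool you already use and flag anything that is not loopback. The Python sketch below assumes the input is a list of `host:port` strings; `classify_bind` and `exposed_listeners` are hypothetical helpers, and the sample addresses in the usage note are illustrative.

```python
import ipaddress


def classify_bind(host):
    """Classify a bind host as 'loopback', 'all-interfaces',
    'specific-interface', or 'unknown'."""
    if host in ("*", ""):
        return "all-interfaces"
    if host == "localhost":
        return "loopback"
    try:
        addr = ipaddress.ip_address(host.strip("[]"))
    except ValueError:
        return "unknown"
    if addr.is_unspecified:  # 0.0.0.0 or ::
        return "all-interfaces"
    if addr.is_loopback:  # 127.0.0.0/8 or ::1
        return "loopback"
    return "specific-interface"


def exposed_listeners(listeners):
    """Return the 'host:port' entries that are reachable beyond loopback."""
    flagged = []
    for entry in listeners:
        # rpartition splits on the last colon, so bracketed IPv6 hosts survive.
        host, _, _port = entry.rpartition(":")
        if classify_bind(host) != "loopback":
            flagged.append(entry)
    return flagged
```

Given `["127.0.0.1:8000", "0.0.0.0:6277"]`, only the second entry is flagged. Anything flagged deserves a second look, even if the bind turns out to be intentional.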

Implement MCP server allowlists. Don’t allow developers or AI agents to connect to arbitrary MCP servers. Maintain a vetted list of approved servers with known security postures. Reject connections to unreviewed servers by default.
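A default-deny allowlist can be as simple as an exact match on scheme, host, and port. The Python sketch below shows the idea. The server `mcp.internal.example` is a placeholder, and `APPROVED_SERVERS`, `normalize`, and `is_approved` are hypothetical names, not part of any MCP SDK.

```python
from urllib.parse import urlsplit

# Placeholder entry; a real list would come from reviewed configuration.
APPROVED_SERVERS = {
    ("https", "mcp.internal.example", 443),
}

DEFAULT_PORTS = {"https": 443, "http": 80}


def normalize(url):
    """Reduce a URL to a (scheme, hostname, port) tuple for exact comparison."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    port = parts.port or DEFAULT_PORTS.get(scheme)
    return (scheme, (parts.hostname or "").lower(), port)


def is_approved(url, approved=APPROVED_SERVERS):
    """Default-deny check: only exact matches against the approved set pass."""
    try:
        return normalize(url) in approved
    except ValueError:
        # Malformed URLs, such as an out-of-range port, are rejected.
        return False
```

Exact matching avoids prefix and suffix tricks: `https://mcp.internal.example.attacker.test/` and `https://mcp.internal.example@attacker.test/` both parse to a different hostname and are refused.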

Treat MCP servers as untrusted input sources. Every URI, parameter, and tool call that passes through an MCP server should be validated and sanitized. Implement URL allowlisting for any server that fetches external resources. Block access to cloud metadata endpoints (169.254.169.254) from MCP server processes.
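A sketch of that validation in Python follows. It rejects non-HTTP schemes, requires the host to be on an allowlist, and then refuses any host that resolves to a loopback, private, or link-local address such as 169.254.169.254. The host `docs.example.com` and the helper names are hypothetical. The check resolves DNS separately from the eventual fetch, so a production fetcher should connect to the address it validated.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

ALLOWED_HOSTS = {"docs.example.com"}  # hypothetical allowlist entry


def ip_is_public(ip_text):
    """Return False for loopback, private, link-local and other special ranges."""
    try:
        addr = ipaddress.ip_address(ip_text)
    except ValueError:
        return False
    # Unwrap IPv4-mapped IPv6 addresses such as ::ffff:169.254.169.254.
    if addr.version == 6 and addr.ipv4_mapped is not None:
        addr = addr.ipv4_mapped
    return not (
        addr.is_private or addr.is_loopback or addr.is_link_local
        or addr.is_reserved or addr.is_multicast or addr.is_unspecified
    )


def resolve_host(host):
    """Resolve a hostname to the sorted set of addresses it maps to."""
    infos = socket.getaddrinfo(host, None, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


def check_fetch_url(url, resolve=resolve_host):
    """Return (allowed, reason) for a URL a server has been asked to fetch."""
    try:
        parts = urlsplit(url)
        host = parts.hostname
    except ValueError:
        return False, "unparseable URL"
    if parts.scheme not in ("http", "https"):
        return False, "scheme not allowed"
    if host is None or host.lower() not in ALLOWED_HOSTS:
        return False, "host not on allowlist"
    try:
        addresses = resolve(host)
    except OSError:
        return False, "host did not resolve"
    if not addresses or not all(ip_is_public(ip) for ip in addresses):
        return False, "resolves to non-public address"
    return True, "ok"
```

The `resolve` parameter exists so the DNS step can be replaced in tests. A `file://` URI fails the scheme check, and an allowlisted name that suddenly points at the metadata address fails the resolution check.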

Isolate development MCP instances from production. The July CVEs targeted developer machines specifically. If your developers test MCP integrations on machines with access to production credentials or networks, a browser-based exploit can reach production. Network segmentation isn’t optional.

The lesson the industry keeps re-learning

The MCP reference implementation vulnerabilities are, in one sense, nothing new. Every major protocol has had its “oh, we forgot to secure the default configuration” moment. But the speed and scale of MCP adoption mean the ecosystem didn’t have the luxury of learning gradually.

As of mid-2025, there were already more than 10,000 active MCP servers deployed across the AI ecosystem. The June 2025 specification update mandating OAuth 2.1 was the right move. But mandating authentication in the spec doesn’t retroactively secure the thousands of servers already built without it.

The fundamental question facing enterprise security teams isn’t whether MCP is a good idea. It is. Standardized AI-tool integration beats the alternative. The question is whether your security posture accounts for the reality that MCP’s ecosystem matured faster than its security did, and that the gap between specification and implementation is where the attackers live.