The Trust Boundary Problem: Identity Architecture for Autonomous AI

Every enterprise security architect knows how to manage permissions. Role-based access control, attribute-based policies, least-privilege principles. These patterns have served us well for decades. But they were designed for a world where human users initiated every consequential action.

That world is ending.

As AI agents move from assistants to autonomous actors, they don't just need permission to act. They need identity. And that distinction matters more than most organizations realize.

The Permission Fallacy

When organizations first deploy AI agents, the instinct is to treat them like any other system integration. Give the agent a service account. Scope its permissions. Monitor its access patterns. Problem solved.

Except it isn't.

Permissions answer the question: what is this system allowed to do? Identity answers a different question: who is responsible when this system acts?

In traditional automation, the distinction didn't matter because a human was always upstream of every decision. The script had permissions, but the human who triggered it had identity.

Autonomous AI agents break this assumption. They observe, decide, and act without human initiation. When an agent negotiates with a vendor's agent, approves a transaction, or modifies a production system, the permission model tells us whether the action was technically allowed. It doesn't tell us who owns the outcome.

Identity Is Accountability Made Visible

Identity architecture for AI agents requires answering questions that permission models don't address.

First, provenance. Where did this agent come from? What model is it running? Who trained it, and on what data? When was it last updated? These aren't metadata curiosities. They're the audit trail that lets an organization trace a bad decision back to its source.
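One way to make provenance traceable is to reduce it to a fingerprint that every logged action can carry. The sketch below is a minimal illustration. It assumes provenance is captured as the hypothetical `Provenance` record shown; the field names are illustrative, not a standard schema. It hashes a canonical JSON encoding, so identical provenance always yields the same digest and any change (a model update, a new operator) yields a different one.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Provenance:
    """Hypothetical provenance record mirroring the questions above."""
    model_id: str            # what model is the agent running?
    trained_by: str          # who trained it?
    training_data_hash: str  # digest of the training-data manifest
    operator: str            # who deployed and runs it?
    last_updated: str        # ISO 8601 date of the last update


def provenance_digest(p: Provenance) -> str:
    """Return a stable SHA-256 hex digest over all provenance fields.

    Keys are sorted and separators fixed so the encoding is canonical:
    the same provenance always produces the same digest, and changing
    any field produces a different one.
    """
    canonical = json.dumps(asdict(p), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Each audit entry could be stamped with this digest. An investigator could then match a bad decision to the exact model, training data, and operator in force when the decision was made.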

Second, attestation. How do we know this agent is what it claims to be? When Agent A communicates with Agent B across organizational boundaries, what prevents impersonation? Traditional API authentication assumes the caller is a system under your control. Agentic interactions assume nothing.
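A challenge-response exchange is one common way to address impersonation. The verifier sends a fresh random nonce, and the agent proves it holds a key bound to its identity. The sketch below uses HMAC with a pre-shared key because Python's standard library has no public-key signing. The function names are hypothetical.

HMAC only proves possession of a shared secret, so anyone else holding that key could answer too. A cross-organizational deployment would instead use asymmetric signatures (for example Ed25519 or X.509 certificates), where only the agent holds the private key.

```python
import hashlib
import hmac
import secrets


def issue_challenge() -> bytes:
    """Verifier side: a fresh 32-byte random nonce per interaction defeats replay."""
    return secrets.token_bytes(32)


def respond(agent_id: str, key: bytes, challenge: bytes) -> bytes:
    """Agent side: MAC over (agent_id, challenge) with the key bound to agent_id.

    Stand-in for a digital signature; assumes a pre-shared key.
    """
    message = agent_id.encode("utf-8") + b"|" + challenge
    return hmac.new(key, message, hashlib.sha256).digest()


def verify(agent_id: str, key: bytes, challenge: bytes, response: bytes) -> bool:
    """Verifier side: recompute the expected MAC and compare in constant time."""
    expected = respond(agent_id, key, challenge)
    return hmac.compare_digest(expected, response)
```

A response fails verification if it was computed for a different agent id, a different challenge, or with a different key.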

Third, lifecycle. Agents aren't static. They learn, adapt, and sometimes drift. An agent that passed validation six months ago may behave differently today. Identity architecture must include mechanisms for continuous verification, not just initial provisioning.
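Continuous verification can start with two cheap checks:

- Is the running artifact the one that was validated?
- Is that validation still recent enough?

The sketch below assumes a hypothetical `Attestation` record holding the validated model's hash and a validation timestamp. A hash match cannot detect behavioral drift on unchanged weights (for example from new inputs, prompts, or tools). That is why the `max_age` bound forces periodic re-evaluation regardless.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Attestation:
    """Hypothetical record of the agent's last successful validation."""
    model_hash: str         # digest of the model artifact that was validated
    validated_at: datetime  # when that validation passed


def verification_status(att: Attestation, current_model_hash: str,
                        now: datetime, max_age: timedelta) -> str:
    """Classify the running agent as 'changed', 'stale', or 'valid'."""
    if current_model_hash != att.model_hash:
        return "changed"  # the running artifact is not the one that was validated
    if now - att.validated_at > max_age:
        return "stale"    # same artifact, but its validation is too old to rely on
    return "valid"
```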

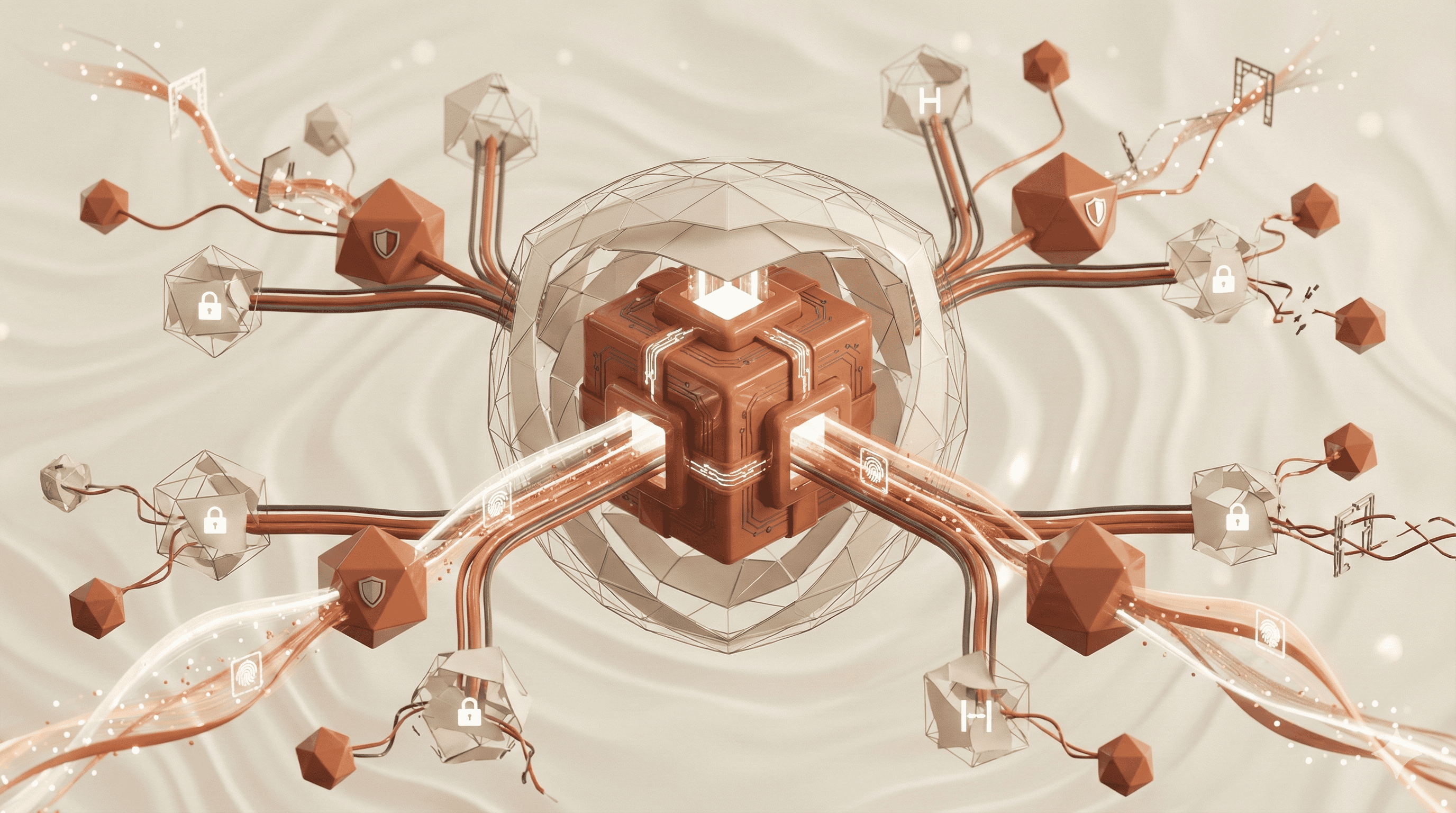

What Constitutes an AI Agent Identity (At Minimum)

An enterprise-grade AI agent identity must include:

- A cryptographic identifier bound to a specific agent or model instance

- Verifiable provenance, including origin, training constraints, and operator

- A declared operational scope defining domains of authority

- A revocable trust status independent of permissions

- A persistent audit identity that survives redeployment and scaling events
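The five primitives can be sketched as a data structure. In this minimal sketch, all names are hypothetical:

- The cryptographic identifier is a SHA-256 fingerprint of a per-instance public key, so a redeployed instance gets a new identifier.
- The `audit_id` persists across redeployments.
- Trust status lives in a separate registry keyed by `audit_id`. Revoking trust therefore reaches every instance of the agent without touching whatever permission system sits alongside it.

```python
import hashlib
from dataclasses import dataclass
from enum import Enum


class TrustStatus(Enum):
    ACTIVE = "active"
    REVOKED = "revoked"


@dataclass(frozen=True)
class AgentIdentity:
    public_key: bytes  # hypothetical: key material of this specific instance
    provenance: str    # hypothetical: digest of the origin/training/operator record
    scope: frozenset   # declared domains of authority
    audit_id: str      # persists across redeployment and scaling events

    @property
    def identifier(self) -> str:
        """Cryptographic identifier: SHA-256 fingerprint of the instance key."""
        return hashlib.sha256(self.public_key).hexdigest()


class TrustRegistry:
    """Revocable trust status keyed by audit_id, kept apart from permissions."""

    def __init__(self) -> None:
        self._status: dict[str, TrustStatus] = {}

    def enroll(self, identity: AgentIdentity) -> None:
        # Enrolling another instance never resets an existing (possibly revoked) status.
        self._status.setdefault(identity.audit_id, TrustStatus.ACTIVE)

    def revoke(self, audit_id: str) -> None:
        self._status[audit_id] = TrustStatus.REVOKED

    def may_act_in(self, identity: AgentIdentity, domain: str) -> bool:
        """Identity-level check only; permission checks would still run separately."""
        status = self._status.get(identity.audit_id)
        return status is TrustStatus.ACTIVE and domain in identity.scope
```

Revoking an `audit_id` in the registry blocks both the original and any redeployed instance, even though their cryptographic identifiers differ.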

Without these primitives, organizations cannot reliably assign responsibility for autonomous actions.

Why This Matters at Scale

A single AI agent with scoped permissions is manageable. A hundred agents interacting across an enterprise is a coordination problem. A thousand agents negotiating across organizational boundaries is a trust architecture challenge that permissions alone cannot solve.

Consider what happens when your procurement agent interacts with a supplier's fulfillment agent. Both agents have permissions within their respective organizations. But the interaction itself exists in a trust boundary that neither organization fully controls.

Who verifies the other agent's identity? Who audits the negotiation? Who is liable if the interaction produces an outcome neither organization intended?

Permission models assume a central authority that grants and revokes access. Identity architecture acknowledges that in a world of autonomous agents, there is no central authority. Trust must be negotiated, verified, and continuously maintained between parties who may never fully control each other's systems.
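Without a central authority, each organization can keep its own trust policy. An interaction then proceeds only when both sides accept each other's agent. The sketch below is a deliberately simplified, hypothetical model: agents are `(agent_id, issuer)` pairs. The step of actually verifying a credential (such as a signature) before consulting the policy is omitted.

```python
from dataclasses import dataclass, field


@dataclass
class OrgTrustPolicy:
    """One organization's locally controlled view of counterpart agents."""
    trusted_issuers: set = field(default_factory=set)  # orgs whose agent credentials it accepts
    revoked_agents: set = field(default_factory=set)   # agent ids it has stopped trusting

    def accepts(self, agent_id: str, issuer: str) -> bool:
        return issuer in self.trusted_issuers and agent_id not in self.revoked_agents


def interaction_allowed(policy_a: OrgTrustPolicy, agent_a: tuple,
                        policy_b: OrgTrustPolicy, agent_b: tuple) -> bool:
    """Proceed only if each side independently accepts the other's agent.

    agent_a and agent_b are hypothetical (agent_id, issuer) pairs.
    """
    return policy_a.accepts(*agent_b) and policy_b.accepts(*agent_a)
```

Either party can withdraw trust unilaterally by revoking the counterpart's agent in its own policy. This blocks the interaction without any coordination with the other side.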

The Design Shift: Identity-First Architecture

Organizations preparing for autonomous AI need to move from permission-first to identity-first design. This means several concrete changes.

Agents need verifiable credentials, not just API keys. They need attestation mechanisms that can prove their provenance, training lineage, and current state to external parties. They need audit trails that survive organizational boundaries.
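One way for audit trails to survive organizational boundaries is to make them tamper-evident. Each party can then verify a shared log without trusting the other's storage. The sketch below is a simple hash chain, an illustrative technique rather than a prescribed one: each entry's SHA-256 hash commits to the previous entry's hash. Detecting removal of the newest entries would additionally require the parties to exchange the latest hash.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _link_hash(prev: str, event: dict) -> str:
    """Hash the previous link together with a canonical encoding of the event."""
    body = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev + body).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event whose hash commits to the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"event": dict(event), "prev": prev, "hash": _link_hash(prev, event)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every link; an edited, reordered, or removed interior entry fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _link_hash(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True
```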

More fundamentally, organizations need to define what it means for an agent to act on their behalf. Not just what the agent is allowed to do, but under what conditions, with what oversight, and with what accountability chain.
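An accountability chain can be made explicit in two steps: record who delegated authority to each agent, and require that the chain end at a named human. In the sketch below, the `delegated_by` mapping and the function name are hypothetical. The function refuses to return an answer when the chain is broken or circular, which is exactly the case where nobody owns the outcome.

```python
def accountable_principal(actor: str, delegated_by: dict, humans: set) -> list:
    """Walk 'acts on behalf of' links from an actor up to a responsible human.

    delegated_by maps each agent to whoever delegated authority to it.
    Returns the full chain ending in a human; raises LookupError if the
    chain ends without a human owner and ValueError if it loops.
    """
    chain = [actor]
    seen = {actor}
    current = actor
    while current not in humans:
        parent = delegated_by.get(current)
        if parent is None:
            raise LookupError(f"no accountable owner for {actor!r}: chain ends at {current!r}")
        if parent in seen:
            raise ValueError(f"circular delegation at {parent!r}")
        chain.append(parent)
        seen.add(parent)
        current = parent
    return chain
```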

This isn't a security enhancement. It's a prerequisite for operating AI systems at scale without creating governance chaos.

The Question Nobody Wants to Ask

The uncomfortable truth is that most organizations deploying autonomous AI haven't thought through identity architecture at all. They've focused on capability. Can the agent perform the task? They've added guardrails. Does the agent stay within bounds? But they haven't answered the fundamental question.

When this agent acts, whose action is it?

Until organizations can answer that question clearly, with audit trails and accountability chains that survive scrutiny, they're not ready for autonomous AI. They're just running automation and hoping nothing goes wrong.

Hope isn't an architecture pattern. Identity is.