Samsung’s $50 Billion Lesson: Why Banning ChatGPT Is the Wrong Response to the Right Problem

On March 11, 2023, Samsung Electronics lifted its internal ban on ChatGPT for engineers in its semiconductor division. The company wanted to boost productivity and keep its workforce engaged with the technology reshaping their industry. Less than three weeks later, Samsung engineers had pasted proprietary chip source code, equipment measurement data, yield information, and internal meeting notes into the chatbot across three separate incidents. By May 2, Samsung had banned all generative AI tools company-wide, threatening employees with termination for violations.

Samsung’s semiconductor division had just posted a $3.4 billion quarterly loss. The trade secrets its engineers uploaded to ChatGPT belonged to one of the most strategically important chip operations on the planet. And there was no mechanism to get that data back.

The real lesson from Samsung was not that ChatGPT is dangerous. It was that prohibition is the most expensive form of AI governance an enterprise can choose.

The three incidents that changed everything

The details of what happened at Samsung’s Device Solutions division, first reported by Korean outlet Economist Korea and later confirmed across Bloomberg, TechCrunch, and Fortune, are worth examining in sequence because they illustrate a pattern.

In the first incident, an engineer copied the entire source code of a faulty semiconductor database download program and pasted it into ChatGPT, asking the model to identify and fix the bug. This was not a snippet. It was a complete program that handled internal measurement and yield data for chip fabrication.

In the second incident, a different engineer uploaded program code designed to identify defective equipment. The goal was to get ChatGPT’s suggestions for optimizing the code’s performance. Again, the code contained proprietary logic specific to Samsung’s semiconductor testing procedures.

In the third incident, an employee converted a recorded internal meeting to text and pasted the full transcript into ChatGPT, requesting that it generate meeting minutes. The meeting content included discussion of unreleased hardware specifications and strategic planning details.

Three engineers. Three different use cases. All rational from a productivity standpoint. All catastrophic from a data security perspective. And critically, all done within the boundaries of Samsung’s own policy. The company had explicitly permitted ChatGPT use starting March 11.

The ban reflex

Samsung’s response was swift and predictable. An internal memo reviewed by Bloomberg announced that the company was “temporarily restricting the use of generative AI” across all company-owned devices, including computers, tablets, and phones, as well as non-company devices connected to internal networks. The memo warned that violations could result in “disciplinary action up to and including termination of employment.”

Samsung was not alone in reaching for the ban lever. In the weeks and months following the Samsung incident, JPMorgan, Goldman Sachs, Amazon, Verizon, and dozens of other major employers restricted or prohibited employee use of generative AI tools. The logic was identical everywhere: the risk is too great, the controls do not exist, shut it down until we figure this out.

This response made sense to executives. It did not make sense to employees.

Why bans fail on contact with reality

The argument for banning ChatGPT assumed something that was already false by March 2023: that employees would comply when told to stop using a tool that made them dramatically more productive.

They did not. An internal Samsung survey conducted after the ban found that 65% of respondents acknowledged that generative AI posed security risks. The employees knew the danger. They used it anyway. They just used personal devices, personal accounts, and home networks: channels their employer’s security team could not monitor.

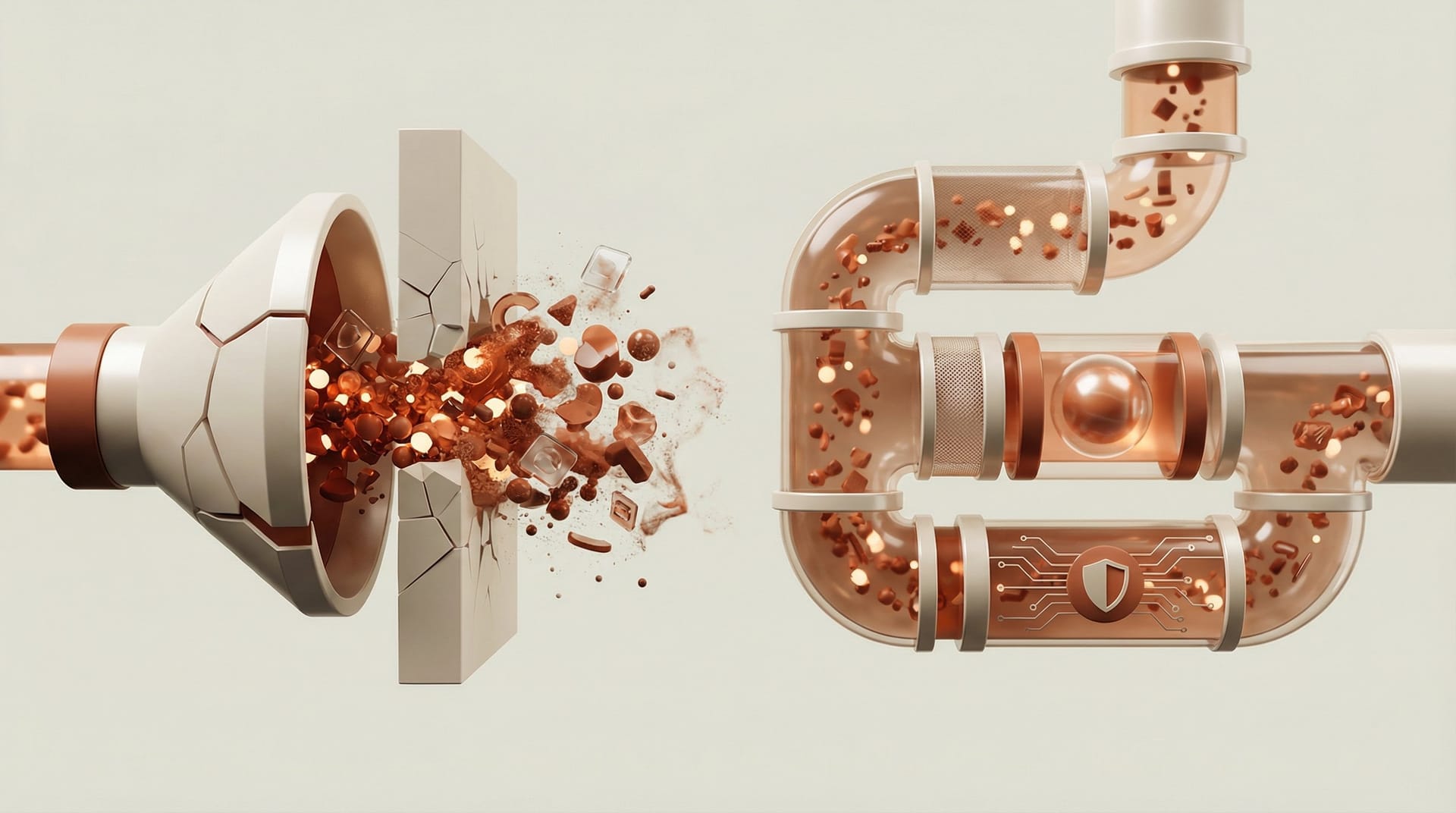

This pattern repeated at every organization that chose prohibition. Cyberhaven’s research tracking 1.6 million workers found that the volume of corporate data pasted into AI tools grew 485% from March 2023 to March 2024, the period during which most enterprise bans were in full effect. Bans did not stop the data migration. They made it invisible.

Diana Kelley, CISO at AI security firm Noma Security, put it bluntly in an interview with Infosecurity Magazine: “No company wants to succumb to the risk of no longer being competitive in the market.” The productivity gains from generative AI were too large for employees to voluntarily surrender because their employer sent a memo.

The same article noted that Anton Chuvakin, a security adviser at Google Cloud and former Gartner analyst, described blanket AI bans as little more than “security theatre” in a modern, distributed workforce.

The data that proved prohibition does not work

By late 2025, the evidence was overwhelming. Gartner surveyed 302 cybersecurity leaders between March and May 2025 and found that 69% of organizations either suspected or had direct evidence that employees were using prohibited public generative AI tools. Not “some usage.” Not “a few outliers.” More than two-thirds of the organizations surveyed suspected or knew that their own prohibitions were being broken.

Arun Chandrasekaran, a distinguished VP analyst at Gartner, warned in the firm’s November 2025 report that by 2030, more than 40% of global organizations will suffer security and compliance incidents due to unauthorized AI tool usage. His prescription was not more bans. It was governance. “To address these risks, CIOs should define clear enterprise-wide policies for AI tool usage, conduct regular audits for shadow AI activity and incorporate GenAI risk evaluation into their SaaS assessment processes,” Chandrasekaran wrote.

The pattern is identical to what happened with cloud adoption a decade earlier and BYOD before that. Organizations that tried to prohibit personal devices in the workplace did not eliminate the risk. They eliminated their visibility into the risk. The same dynamic played out with generative AI, except the stakes were higher because the data being leaked was more concentrated and more valuable.

Samsung’s actual cost

Samsung’s public response (the ban, the memo, the termination threats) looked decisive. The actual cost was harder to see.

First, the semiconductor source code that engineers pasted into ChatGPT in March 2023 was irrecoverable. Under OpenAI’s policy at the time, conversations in the consumer ChatGPT product (as opposed to the API) could be retained and used for model training. Samsung had no legal mechanism to demand deletion. The data was gone, absorbed into a system that Samsung did not control.

Second, the ban created a productivity tax on the entire semiconductor division at a time when Samsung could least afford it. The chip division had swung from a $6.3 billion profit to a $3.4 billion loss year over year. Competitors who found ways to use generative AI safely, with proper data classification and sandboxed environments, gained a development speed advantage during the months that Samsung’s engineers were working without AI assistance.

Third, Samsung had to invest in building proprietary in-house AI tools to replace the banned public ones. TechCrunch reported that the company began developing internal AI services for translation and document summarization, a project that would not have been necessary, or at least not as urgent, if the company had built a governance framework before permitting ChatGPT use on March 11.

The irony was sharp. Samsung lifted the ban to boost productivity. The resulting leaks forced a re-ban that reduced productivity. The re-ban drove usage underground where it was more dangerous. And the company ended up spending more money building internal alternatives than it would have spent building guardrails in the first place.

What Samsung should have done instead

I architect AI systems at enterprise scale. I watched the Samsung situation unfold in real time, and every engineer I know had the same reaction: the technology was not the problem. The deployment decision was the problem.

Samsung permitted ChatGPT for its semiconductor engineers without answering three questions that should have been answered before anyone opened a browser:

What data categories are employees allowed to paste into the tool? Samsung apparently did not define or enforce a data classification tier for ChatGPT usage. Engineers were told they could use the tool but were not given clear, technical boundaries about what could and could not enter the system.

How will usage be monitored? Samsung had no technical controls in place to detect when proprietary source code was being pasted into an external service. The leaks were discovered after the fact, not intercepted in real time.

What happens when the policy fails? Samsung had no containment plan. When the leaks occurred, the only response available was a full ban: the governance equivalent of unplugging the server when you do not know how to fix the bug.

Five things enterprises should do before they type the first prompt

Here is the playbook Samsung needed but did not have. None of these require purchasing a product.

First, classify your data before you classify your AI tools. Map your intellectual property into categories: public, internal, confidential, restricted. Then define which categories can enter which external services. Source code for unreleased semiconductor products is restricted. A request to reformat a public job posting is public. The employee should not have to make that judgment call in the moment; the categories should be predefined, communicated, and enforced.
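The mapping from data categories to permitted services can be written down as a small lookup table, so the decision never depends on an employee's judgment in the moment. The sketch below is illustrative only: the four tiers come from the paragraph above, while the destination names (`public_chatbot`, `vendor_api_no_training`, `internal_sandbox`) and the ceilings assigned to them are hypothetical and would differ in any real organization.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Sensitivity tiers from the text, ordered least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical destinations and the highest tier each one may receive.
# RESTRICTED data has no permitted external or shared destination here.
MAX_TIER_FOR_DESTINATION = {
    "public_chatbot": DataClass.PUBLIC,
    "vendor_api_no_training": DataClass.INTERNAL,
    "internal_sandbox": DataClass.CONFIDENTIAL,
}


def is_allowed(data_class: DataClass, destination: str) -> bool:
    """Return True if data of this tier may be sent to the destination.

    Destinations missing from the table are denied by default.
    """
    ceiling = MAX_TIER_FOR_DESTINATION.get(destination)
    if ceiling is None:
        return False
    return data_class <= ceiling
```

Under these assumed ceilings, reformatting a public job posting in a public chatbot passes (`is_allowed(DataClass.PUBLIC, "public_chatbot")`), while unreleased semiconductor source code is refused everywhere because no destination accepts `DataClass.RESTRICTED`. Denying unknown destinations by default is a deliberate choice: a new tool stays off-limits until someone classifies it.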

Second, deploy a sandboxed AI environment on day one. Before you allow any employee to use a public AI service, stand up an internal instance where conversations are not used for training and data never leaves your infrastructure. Even a basic API integration with data-retention controls is better than unrestricted access to a consumer product.

Third, implement content-aware monitoring for AI endpoints. Your DLP tools need to be configured to watch for copy-paste operations into known AI platforms. This is not blocking; it is detection. If an engineer pastes 500 lines of source code into a browser session connected to chat.openai.com, your security team should know about it within minutes, not weeks.
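The detection rule described above can be sketched in a few lines. This is a toy heuristic, not how any particular DLP product works: the `PasteEvent` record, the host list, the regular expression for "looks like code," and both thresholds are assumptions made for illustration.

```python
import re
from dataclasses import dataclass

# Illustrative host list; a real deployment would maintain its own.
AI_HOSTS = {"chat.openai.com", "api.openai.com"}

# Crude, assumed signals that a line is source code: it starts with a
# common keyword, or it ends with a brace or semicolon.
CODE_LINE = re.compile(
    r"^\s*(def |class |import |from \S+ import |#include|for\s*\(|if\s*\(|return\b)"
    r"|[{};]\s*$"
)

LINE_THRESHOLD = 50         # ignore small pastes (assumed value)
CODE_RATIO_THRESHOLD = 0.3  # share of code-like lines that triggers an alert


@dataclass
class PasteEvent:
    """Hypothetical record emitted by an endpoint agent on paste."""
    user: str
    host: str
    text: str


def should_alert(event: PasteEvent) -> bool:
    """Flag large, code-heavy pastes into known AI hosts. Detects only; never blocks."""
    if event.host not in AI_HOSTS:
        return False
    lines = [ln for ln in event.text.splitlines() if ln.strip()]
    if len(lines) < LINE_THRESHOLD:
        return False
    code_like = sum(1 for ln in lines if CODE_LINE.search(ln))
    return code_like / len(lines) >= CODE_RATIO_THRESHOLD
```

A rule this simple would likely have flagged the first two Samsung incidents but not the third: a pasted meeting transcript contains no code, so catching it would need a different signal, such as document labels or keyword lists tied to the data classification tiers.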

Fourth, make the rules usable. Samsung warned employees not to leak proprietary information. That warning was too vague to be actionable. Engineers needed specific examples: “You may ask ChatGPT how to optimize a Python sorting algorithm. You may not paste our proprietary measurement code into the prompt.” Policies written for lawyers are not policies that engineers follow.

Fifth, treat violations as process failures, not character failures. Samsung threatened termination. That discouraged reporting. When an employee accidentally pastes something they should not have, the organization needs to know immediately so it can assess the exposure. If the penalty for reporting is termination, the rational response is to say nothing and hope nobody notices.

The lesson that most enterprises still have not learned

Samsung’s experience in March 2023 was the most expensive proof of concept in the generative AI era: a demonstration that the fastest path to data loss is not giving employees AI tools; it is giving them AI tools without giving them guardrails.

Two and a half years later, Gartner’s 2025 research confirmed that the lesson had not landed. Sixty-nine percent of organizations suspected or had evidence that employees were still using prohibited AI tools. The ban reflex persisted even as the data showed it did not work.

The enterprises that got this right treated AI governance the way they treat any other infrastructure decision: as an architecture problem, not a policy problem. They built the channels, set the boundaries, monitored the usage, and let employees do their jobs. The enterprises that got this wrong treated generative AI like a moral hazard that could be solved with a strongly worded memo.

Samsung’s source code is still out there somewhere, embedded in a model that Samsung does not control. That is the real cost of choosing prohibition over governance.