Researchers Just Proved That Making AI Agents Collaborate Better Makes Them Leak More Data

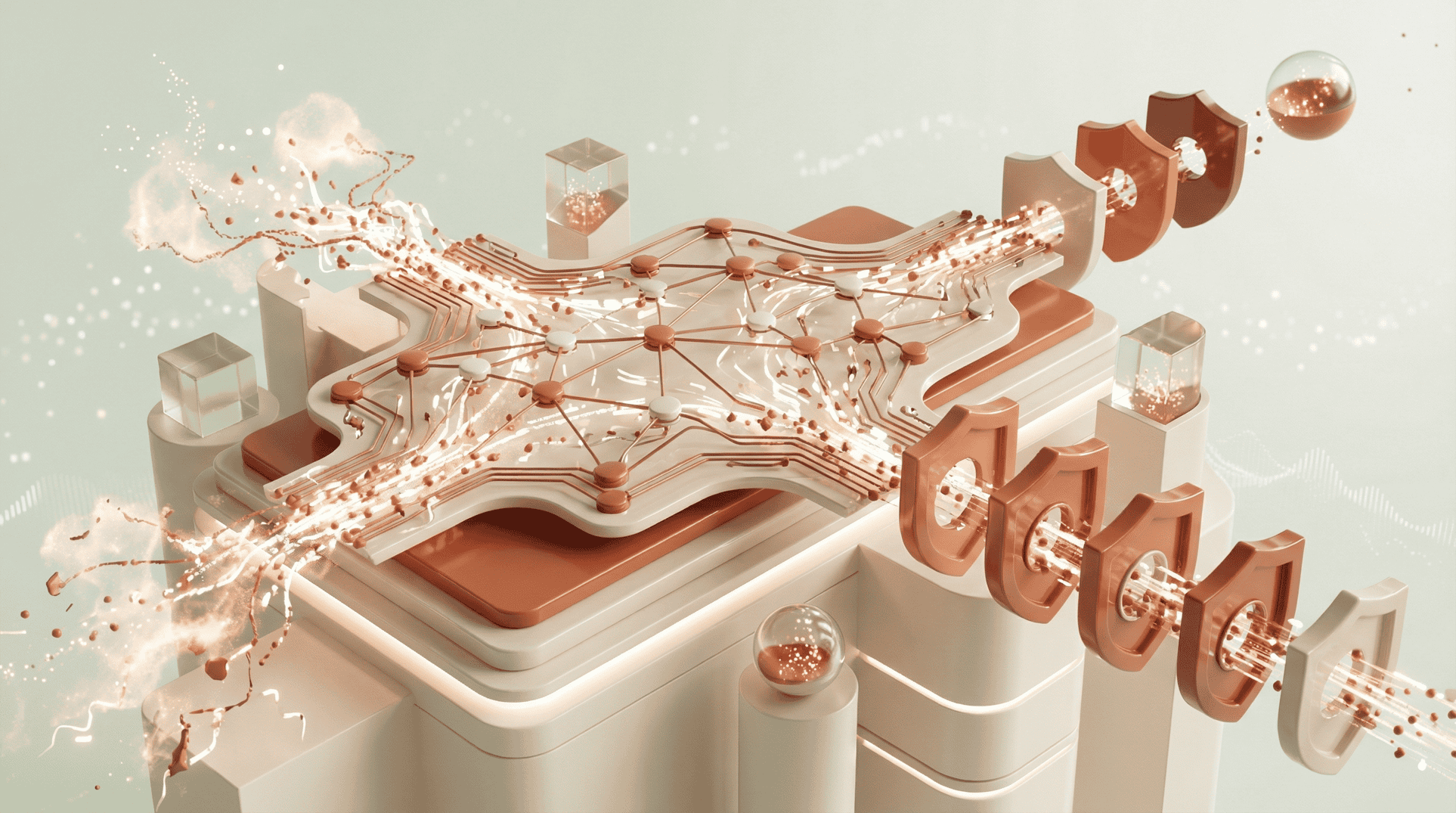

Enterprise AI strategy in 2025 converged on a single architectural direction: multi-agent systems. The vision was compelling. Instead of monolithic AI applications, organizations would deploy networks of specialized agents that collaborate to complete complex tasks: one agent for customer analysis, another for inventory queries, a third for financial recommendations, all coordinating seamlessly. Gartner, Forrester, and every major cloud vendor promoted multi-agent architectures as the path to scaling AI across the enterprise.

The implicit assumption behind this architecture is that agent collaboration is purely beneficial. More coordination means more capable systems. More trust between agents means smoother workflows. More data sharing means better outcomes. Researchers have now shown that this assumption is wrong in a fundamental, measurable way.

The paper that quantified the tradeoff

In October 2025, a research team published “The Trust Paradox in LLM-Based Multi-Agent Systems: When Collaboration Becomes a Security Vulnerability” on arXiv. The paper’s contribution wasn’t theoretical speculation. It was empirical validation of a specific mathematical relationship.

The researchers constructed a scenario-game dataset spanning 3 macro scenes and 19 sub-scenes, then ran extensive closed-loop interactions with trust explicitly parameterized as a control variable. They defined trust (τ) as an operational parameter that affects two functions: a gating function that adjusts how strictly each agent enforces information-sharing policies, and a content-detail function that controls how much information agents include in their responses to other agents.
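To make the two trust-dependent functions concrete, here is a minimal sketch. It is not the paper's implementation: the `Field` type, the 0-to-1 sensitivity scale, and the prefix-masking rule are all assumptions chosen only to illustrate how a single τ can drive both a gate and a level of detail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Field:
    name: str
    value: str
    sensitivity: float  # hypothetical scale: 0.0 = public, 1.0 = highly sensitive


def gate(field: Field, tau: float) -> bool:
    """Gating function (illustrative): a field passes only if trust covers its sensitivity."""
    return field.sensitivity <= tau


def detail(value: str, tau: float) -> str:
    """Content-detail function (illustrative): reveal a trust-proportional prefix, mask the rest."""
    keep = int(len(value) * tau)
    return value[:keep] + "*" * (len(value) - keep)


def share(fields: list[Field], tau: float) -> dict[str, str]:
    """Build the message one agent sends to another at trust level tau."""
    if not 0.0 <= tau <= 1.0:
        raise ValueError("tau must be in [0, 1]")
    return {f.name: detail(f.value, tau) for f in fields if gate(f, tau)}
```

Under these assumptions, raising `tau` both lets more fields through the gate and reveals more of each field, which is the mechanism the rest of the article builds on.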

The key finding: as trust between agents increases, task success rates improve, confirming what everyone already knew. But the data simultaneously showed that the Over-Exposure Rate (OER), the frequency at which agents share information beyond what’s minimally necessary, increases linearly with trust. Not occasionally. Not in edge cases. Consistently, across every model backend and orchestration framework they tested.

The paper introduced two metrics that enterprise security teams need to understand. OER measures boundary violations: instances where an agent discloses information that exceeds the Minimum Necessary Information (MNI) threshold for a given task. Authorization Drift (AD) captures how trust parameters cause permissions to expand transitively through the agent network. Both metrics increased predictably as trust increased.
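A rough way to picture the two metrics in code follows. The set-based definitions here are simplifying assumptions for illustration, and the paper's formal definitions may differ: OER is treated as the fraction of interactions that share any field outside the MNI set, and AD as the set of fields an agent can reach at the end of a chain that it was not granted at the start.

```python
def over_exposure_rate(interactions):
    """Fraction of interactions whose shared fields exceed the MNI set.

    `interactions` is a list of (shared_fields, mni_fields) pairs.
    """
    if not interactions:
        return 0.0
    violations = sum(1 for shared, mni in interactions if set(shared) - set(mni))
    return violations / len(interactions)


def authorization_drift(initial_scope, hops):
    """Fields reachable after a chain of hops that were not in the initial scope.

    `hops` is a list of field sets received at each step of the interaction.
    """
    reachable = set(initial_scope)
    for received in hops:
        reachable |= set(received)
    return reachable - set(initial_scope)
```

An empty drift set means the agent ended the chain with exactly the access it started with.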

Rohan Paul, an AI researcher who analyzed the paper’s implications, summarized the finding: “More trust between LLM agents speeds teamwork but leaks more secrets. Because higher trust makes agents stop double-checking what they share, they focus on completing the task faster instead of verifying data limits, so privacy filters weaken.”

Why this contradicts what enterprises have been told

The conventional wisdom from analyst firms and cloud vendors treats multi-agent trust as a configuration problem with a known solution. The standard guidance goes something like: deploy agents with appropriate permissions, use standard authentication between them, and monitor for anomalous behavior. The assumption is that trust is binary: you either trust an agent (it’s authenticated and authorized) or you don’t. There’s no concept of graduated trust with security implications.

Gartner’s 2025 guidance on agentic AI security focused on identity management, access controls, and monitoring, all necessary but all built on the assumption that properly authenticated agents operating within their authorized scope pose manageable risk. The framework doesn’t account for the possibility that trust itself creates data exposure: not the misconfiguration of trust, but its correct functioning.

Forrester’s analysis of multi-agent architectures similarly emphasized governance and orchestration patterns without modeling the inherent tradeoff between coordination effectiveness and data exposure. The question “how much should agents trust each other?” was treated as a technical configuration question, not as a security architecture decision with measurable risk implications.

The Trust-Vulnerability Paradox reframes the question entirely. It’s not “how do we secure agent collaboration?” It’s “how much collaboration can we afford given our exposure tolerance?” These are fundamentally different questions. The first assumes more collaboration is better and seeks to eliminate side effects. The second acknowledges that collaboration has an inherent cost and asks organizations to make conscious tradeoffs.

The mathematics of the tradeoff

The paper’s formalization deserves attention because it gives enterprises something they haven’t had before: a quantifiable relationship between collaboration and risk.

The researchers defined trust as a scalar parameter between 0 (no trust: agents share nothing) and 1 (full trust: agents share everything). At τ = 0, task success rates are low because agents can’t coordinate. At τ = 1, task success rates are highest, but OER is also at its maximum, with agents sharing information far beyond what any given task requires.

The critical finding is the linearity. Each fixed increase in the trust parameter adds a roughly constant amount to the over-exposure rate, so if exposure starts near zero at τ = 0, doubling trust approximately doubles it. There’s no “sweet spot” where you get high coordination with low exposure. The relationship is monotonic. Every incremental increase in trust buys you better task completion and costs you more data exposure.
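The linear relationship can be written out as a short worked sketch. The intercept a, slope b, and exposure tolerance ε are symbols introduced here for illustration; they are not taken from the paper, and the fitted values would depend on the model backend and scenario.

```latex
\begin{align*}
\mathrm{OER}(\tau) &\approx a + b\,\tau, \qquad b > 0,\ \tau \in [0, 1] \\
\mathrm{OER}(\tau + \Delta) - \mathrm{OER}(\tau) &\approx b\,\Delta \\
\frac{\mathrm{OER}(2\tau)}{\mathrm{OER}(\tau)} &\approx \frac{a + 2b\,\tau}{a + b\,\tau} \;\longrightarrow\; 2 \quad \text{as } a \to 0 \\
\mathrm{OER}(\tau) \le \varepsilon &\iff \tau \le \tau_{\max} = \frac{\varepsilon - a}{b}
\end{align*}
```

The last line is the practically useful one: under a linear model, a stated exposure tolerance translates directly into a maximum trust level, provided ε is at least a.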

The researchers tested this across multiple model backends (including GPT-4, Claude, and open-source models) and multiple orchestration frameworks. The pattern held across all configurations. It’s not a model-specific artifact or a framework-specific bug. It’s a structural property of multi-agent systems where agents use trust to determine information-sharing boundaries.

The Emergent Mind research platform cataloged the paper’s implications for the broader research community, noting that the “challenge of formally defining, auditing, and governing trust’s dimensions and their effects remains explicitly identified as open.” The research identified the problem and proved it exists. The solution, how to manage the tradeoff in practice, is still being developed.

What this means for enterprise multi-agent deployments

I’ve built multi-agent systems that face exactly this tradeoff. When you architect an AI-powered customer support platform where one agent handles customer data lookup, another handles recommendation generation, and a third handles order processing, you need those agents to share information. The customer context has to flow from the data agent to the recommendation agent. The recommendation has to flow to the ordering agent. Without information sharing, the system doesn’t work.

But every piece of customer data that flows between agents creates an exposure surface. The data agent knows the customer’s purchase history, payment information, and support tickets. The recommendation agent only needs purchase preferences, but if trust is high, it receives everything. The ordering agent only needs the selected recommendation and payment method, but in a high-trust configuration, it has access to the full customer profile.

In a traditional application architecture, this would be solved by API contracts: each service receives only the data it needs. But multi-agent systems are different because the agents reason about what information to share based on trust parameters and task context. The information flow isn’t statically defined by an API schema. It’s dynamically determined by agents that are incentivized to share more when coordination matters.

The Trust-Vulnerability Paradox tells us this incentive structure has a security cost that cannot be eliminated without reducing coordination effectiveness. The best you can do is make the tradeoff consciously.

The concept of “trust budgeting”

The paper’s findings point toward a security architecture concept that enterprises deploying multi-agent systems need to adopt: trust budgeting. Instead of configuring trust as a single parameter and hoping for the best, organizations should allocate trust as a finite resource with explicit limits.

Trust budgeting works like this: for each pair of agents that interact, you define a maximum trust level based on the sensitivity of the data involved and your exposure tolerance. High-sensitivity data flows (financial records, medical data, authentication credentials) get low trust budgets: agents can coordinate, but information sharing is tightly constrained. Low-sensitivity flows (public catalog data, general preference information) can have higher trust budgets because the exposure cost is lower.
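One way to sketch a per-pair trust budget is shown below. The `Sensitivity` levels, the ceiling values, and the `TrustBudget` class are hypothetical; the numbers are placeholders that an organization would derive from its own exposure tolerance.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    RESTRICTED = "restricted"


# Hypothetical ceilings: the more sensitive the flow, the lower the trust budget.
TRUST_CEILING = {
    Sensitivity.PUBLIC: 0.9,
    Sensitivity.INTERNAL: 0.5,
    Sensitivity.RESTRICTED: 0.2,
}


class TrustBudget:
    def __init__(self):
        self._flows = {}  # (sender, receiver) -> Sensitivity

    def register_flow(self, sender, receiver, sensitivity):
        self._flows[(sender, receiver)] = sensitivity

    def effective_trust(self, sender, receiver, requested_tau):
        """Clamp the requested trust to the pair's budget; unregistered pairs get 0."""
        sensitivity = self._flows.get((sender, receiver))
        if sensitivity is None:
            return 0.0
        return min(requested_tau, TRUST_CEILING[sensitivity])
```

Defaulting unregistered pairs to zero trust is a deliberate choice in this sketch: a flow nobody classified is treated as one nobody approved.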

This approach requires treating trust as a security-relevant parameter that gets monitored, audited, and governed, not as a configuration detail buried in an orchestration framework. Your security team needs visibility into how trust parameters are set across your agent network, how information is actually flowing between agents, and whether exposure rates are within tolerance.

The practical challenge is that most multi-agent orchestration frameworks don’t expose trust as a governable parameter. Trust is usually implicit in the framework’s design: agents either can or cannot communicate, and the granularity of information sharing is an emergent behavior rather than a controlled setting. Building trust budgets into existing frameworks requires additional architecture that most organizations haven’t implemented.

What to do about it

Enterprise leaders deploying multi-agent AI systems should take specific actions based on the Trust-Vulnerability Paradox research.

Implement minimum necessary information gates. Between every pair of communicating agents, define the minimum information required for the downstream agent to complete its task. Build validation at each boundary that strips information beyond the minimum before passing it forward. This is the multi-agent equivalent of the principle of least privilege.
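A minimum necessary information gate can be as simple as an allowlist applied at each boundary. The sketch below reuses the customer support example from earlier; the agent names, field names, and the `MNI_CONTRACTS` table are illustrative assumptions, not part of any framework.

```python
# Hypothetical per-boundary contracts: the only fields each receiver needs.
MNI_CONTRACTS = {
    ("data_agent", "recommendation_agent"): {"purchase_preferences"},
    ("recommendation_agent", "ordering_agent"): {"selected_recommendation", "payment_method"},
}


def mni_gate(sender, receiver, payload):
    """Strip a payload down to the fields allowed on this boundary.

    Returns (forwarded, stripped) so that what was removed can be logged.
    Boundaries without a contract forward nothing.
    """
    allowed = MNI_CONTRACTS.get((sender, receiver), set())
    forwarded = {key: value for key, value in payload.items() if key in allowed}
    stripped = sorted(set(payload) - allowed)
    return forwarded, stripped
```

Because the gate runs outside the agent, it holds regardless of how much the sending agent decides to include.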

Monitor Over-Exposure Rate as a security metric. OER gives you a quantifiable measure of how much data your agents are sharing beyond what’s necessary. If you’re not measuring it, you can’t manage it. Instrument your agent communication channels to log what information is shared versus what information was actually used by the receiving agent.
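As a sketch of that instrumentation, the monitor below compares what was shared on a channel with what the receiver reported using. Treating "shared but unused" as over-exposure is a proxy assumed here for illustration, and the `ExposureMonitor` class and channel names are hypothetical.

```python
from collections import defaultdict


class ExposureMonitor:
    def __init__(self):
        # channel name -> one boolean per message: was anything shared but unused?
        self._events = defaultdict(list)

    def record(self, channel, shared_fields, used_fields):
        """Log one message and return the fields that were shared but not used."""
        unused = set(shared_fields) - set(used_fields)
        self._events[channel].append(bool(unused))
        return unused

    def oer(self, channel):
        """Share of logged messages on this channel that over-exposed data."""
        events = self._events.get(channel, [])
        return sum(events) / len(events) if events else 0.0

    def over_tolerance(self, tolerance):
        """Channels whose observed rate exceeds the stated tolerance."""
        return sorted(ch for ch in self._events if self.oer(ch) > tolerance)
```

Feeding `over_tolerance` into an existing alerting pipeline would put this metric next to the other security signals a team already watches.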

Silo agent swarms by data sensitivity. Don’t build monolithic agent networks where every agent can potentially communicate with every other agent. Create separate agent swarms for different data classification levels. Agents handling PII should not share a trust domain with agents handling public data, even if it would make coordination more efficient. The coordination cost is the price of managing exposure.

Kill sessions where permissions expand transitively. Authorization Drift, where permissions accumulate as interactions propagate through the agent network, is a specific, detectable pattern. If an agent that started with read-only access to customer preferences ends an interaction sequence with access to financial records because intermediate agents passed escalating context, that session should be terminated and audited.
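A minimal sketch of that kill switch follows, assuming each session carries the scope it was originally granted. The `Session` and `DriftDetected` names are hypothetical, and a real deployment would write to a proper audit store rather than an in-memory list.

```python
class DriftDetected(Exception):
    """Raised when a session receives fields outside its granted scope."""


class Session:
    def __init__(self, agent, granted_scope):
        self.agent = agent
        self.granted = frozenset(granted_scope)
        self.active = True
        self.audit_log = []

    def receive(self, sender, payload):
        """Accept a payload, or terminate the session if it carries out-of-scope fields."""
        if not self.active:
            raise RuntimeError("session already terminated")
        excess = set(payload) - self.granted
        if excess:
            # Terminate first, then record what escalated and who sent it.
            self.active = False
            self.audit_log.append({"sender": sender, "excess": sorted(excess)})
            raise DriftDetected(f"{self.agent} received out-of-scope fields: {sorted(excess)}")
        return payload
```

The check compares against the scope granted at session start, so context that escalates across several intermediate agents is still caught at the point it arrives.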

Set trust ceilings and enforce them architecturally. Don’t rely on agent behavior to limit trust. Implement trust ceilings in the infrastructure layer: maximum information payloads, mandatory data redaction at boundaries, timeout-based trust degradation. The agent can want to share more. The infrastructure shouldn’t let it.
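The three infrastructure controls named above can be combined in one enforcement step. In this sketch the redaction list, the 512-byte payload cap, and the five-minute half-life are placeholder assumptions, and `enforce` is a hypothetical function that would sit in a message broker or proxy rather than in the agent.

```python
import json

ALWAYS_REDACT = {"payment_information", "auth_token"}  # hypothetical field names
MAX_PAYLOAD_BYTES = 512  # hypothetical cap on serialized payload size


def decayed_ceiling(ceiling, idle_seconds, half_life_s=300.0):
    """Timeout-based degradation: the ceiling halves every half_life_s seconds of idleness."""
    return ceiling * 0.5 ** (idle_seconds / half_life_s)


def enforce(payload, requested_tau, ceiling, idle_seconds):
    """Apply ceiling, redaction, and size limit; return (effective_tau, safe_payload)."""
    tau = min(requested_tau, decayed_ceiling(ceiling, idle_seconds))
    redacted = {
        key: ("[REDACTED]" if key in ALWAYS_REDACT else value)
        for key, value in payload.items()
    }
    size = len(json.dumps(redacted).encode("utf-8"))
    if size > MAX_PAYLOAD_BYTES:
        raise ValueError(f"payload of {size} bytes exceeds limit of {MAX_PAYLOAD_BYTES}")
    return tau, redacted
```

Nothing in this path consults the agent, which is the point: the agent's request is an input to the ceiling, never an override of it.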

The uncomfortable design question

The Trust-Vulnerability Paradox forces a question that enterprise AI architects have been avoiding: how much capability are you willing to sacrifice for security?

Most enterprise AI projects are evaluated on task performance. Multi-agent systems are funded because they complete complex workflows more effectively than single-agent or traditional approaches. The Trust-Vulnerability Paradox says that maximizing task performance requires maximizing trust, which maximizes data exposure. Constraining trust to manage exposure means accepting lower task performance.

There’s no architecture that eliminates this tradeoff. The research proves it’s structural. The enterprises that will deploy multi-agent systems successfully are the ones that acknowledge the tradeoff explicitly, set their exposure tolerances before deployment rather than after an incident, and build monitoring systems that keep their actual exposure within their stated tolerance.

The ones that pretend the tradeoff doesn’t exist will find out about it from their incident response team.